Russian Developers Propose New Method to Test AI for Truthfulness

A new open framework called DRAGOn evaluates how accurately AI assistants answer when they draw on up-to-date data and external sources.

Developers from SberAI, MWS AI, and several Russian universities have introduced an open methodology for testing Russian-language AI assistants that rely on search and external data sources. The framework is called DRAGOn.

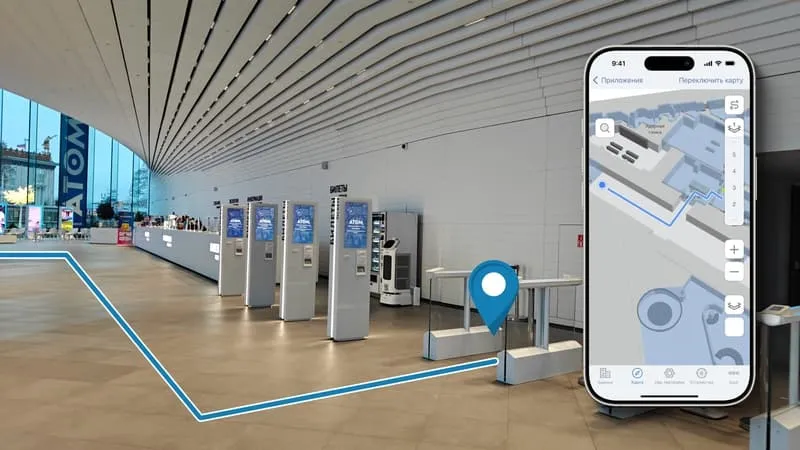

The approach targets AI systems used in corporate environments. These systems draw on internal knowledge bases and ground their answers in current data rather than generating unsupported responses, a common problem with base models.

Why AI Hallucinates

Traditional evaluations rely on fixed datasets that quickly become outdated. Over time, these datasets can also end up in model training data, so a model may score well simply because it has already seen the answers, which reduces the value of the tests.

In addition, standard benchmarks do not account for company-specific contexts, which makes generalized evaluations less meaningful for any particular deployment.

How DRAGOn Works

The system is built around continuously updated data streams. DRAGOn aggregates fresh news feeds and organizes them into a structured set of facts, which is then used to generate evaluation tasks. Instead of simple questions, it poses complex logical challenges that require AI systems to synthesize information from multiple sources.
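
The idea of building multi-source tasks from structured facts can be illustrated with a minimal sketch. It is not DRAGOn's actual implementation: the `Fact` record, the `two_hop_tasks` function, the question template, and the sample data are all hypothetical, and the sketch assumes facts have already been extracted from news items as subject-relation-object triples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    """A hypothetical fact record extracted from one news item."""
    subject: str
    relation: str
    obj: str
    source: str  # identifier of the news item the fact came from


def two_hop_tasks(facts):
    """Chain pairs of facts from different sources through a shared entity."""
    by_subject = {}
    for fact in facts:
        by_subject.setdefault(fact.subject, []).append(fact)

    tasks = []
    for first in facts:
        # A second fact continues the chain if its subject is the first fact's object.
        for second in by_subject.get(first.obj, []):
            if second.source == first.source:
                continue  # keep only tasks that need more than one source
            question = (
                f"What is the {second.relation} of the "
                f"{first.relation} of {first.subject}?"
            )
            tasks.append({
                "question": question,
                "answer": second.obj,
                "sources": [first.source, second.source],
            })
    return tasks


# Made-up sample facts, for illustration only.
facts = [
    Fact("Company A", "supplier", "Company B", "news-1"),
    Fact("Company B", "headquarters", "City C", "news-2"),
    Fact("Company B", "founder", "Person D", "news-1"),
]
tasks = two_hop_tasks(facts)
```

With this sample, only the chain that spans `news-1` and `news-2` becomes a task, so answering it requires combining two separate sources.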

A separate neural model performs the evaluation, assessing responses based on meaning and completeness rather than word-level matching.
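
The difference between word-level matching and judging by meaning can be shown in a short sketch. This is an assumption-laden illustration rather than DRAGOn's evaluator: the prompt wording, the YES/NO verdict format, and the names `exact_match`, `judge_score`, and `stub_judge` are hypothetical, and the stub stands in for a call to a real judge model.

```python
def exact_match(prediction: str, reference: str) -> bool:
    """Word-level check: true only if the strings match after normalization."""
    return prediction.strip().lower() == reference.strip().lower()


# Hypothetical judge prompt; a real evaluator would use its own wording.
JUDGE_TEMPLATE = (
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Candidate answer: {candidate}\n"
    "Does the candidate convey the same facts as the reference, "
    "completely and without contradiction? Reply YES or NO."
)


def judge_score(judge, question: str, reference: str, candidate: str) -> bool:
    """Ask a judge callable for a verdict and parse it as a boolean."""
    prompt = JUDGE_TEMPLATE.format(
        question=question, reference=reference, candidate=candidate
    )
    verdict = judge(prompt)
    return verdict.strip().upper().startswith("YES")


def stub_judge(prompt: str) -> str:
    """Placeholder for a real model call; returns a fixed verdict."""
    return "YES"


question = "Where is the supplier of Company A headquartered?"
reference = "City C"
candidate = "Its headquarters are located in City C."

strict = exact_match(candidate, reference)
lenient = judge_score(stub_judge, question, reference, candidate)
```

Here the paraphrased answer fails the exact-match check even though it states the same fact, which is the case a meaning-based judge is meant to handle. In practice `stub_judge` would be replaced by a function that sends the prompt to a separate model.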

What It Means for Business

Companies can deploy the tool within their own infrastructure and test AI behavior using real internal data before launch.

This provides a clearer picture of how systems perform in customer service, analytics, or document workflows, and allows for comparisons across models using consistent criteria rather than abstract benchmarks.

Developer Collaboration

The project involved specialists from Sberbank, MWS AI, and several universities, including ITMO University, MISIS, HSE University, MBZUAI, IITU, and Yandex School of Data Analysis.

The developers have also launched an open ranking of Russian-language RAG (retrieval-augmented generation) systems. Early results show that combinations of multiple models with enhanced retrieval perform best, although even these systems still struggle with complex logical relationships in continuously updated data streams.