MEPhI Develops Neural Network Built to Withstand Data Poisoning Attacks

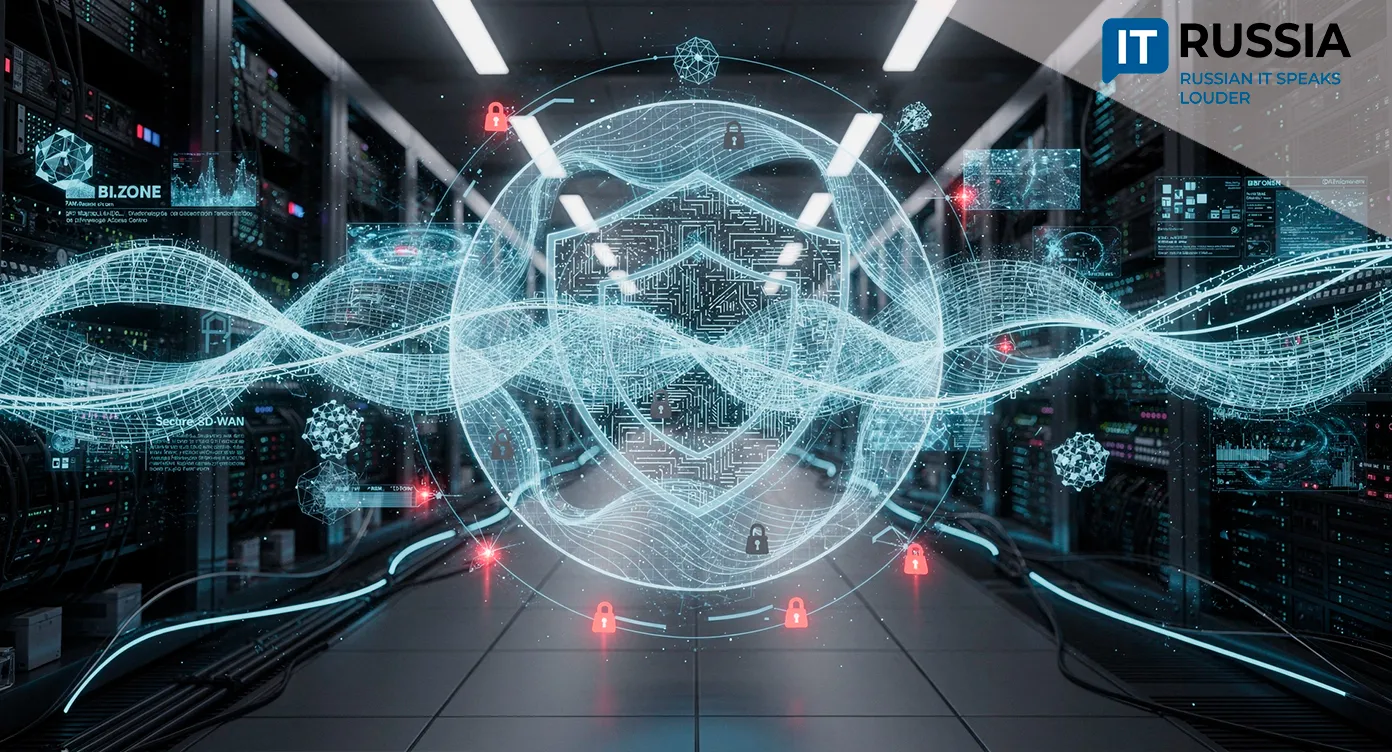

Researchers at the National Research Nuclear University MEPhI have developed MambaShield, a new neural network architecture intended for deployment in security-sensitive environments, including server infrastructure, banking, healthcare and industrial systems.

The system addresses a core security risk: data poisoning, where attackers inject malicious data into training datasets to manipulate system behavior.
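To make the mechanism concrete, here is a minimal sketch of one simple form of poisoning, assuming a toy one-dimensional nearest-centroid classifier. The classifier, the data values and all function names are illustrative assumptions and are not taken from the MambaShield study.

```python
from statistics import mean


def fit_centroids(samples):
    """Return {label: mean feature value} for (feature, label) pairs."""
    by_label = {}
    for x, y in samples:
        by_label.setdefault(y, []).append(x)
    return {y: mean(xs) for y, xs in by_label.items()}


def predict(centroids, x):
    """Pick the label whose centroid is closest to x."""
    return min(centroids, key=lambda y: abs(x - centroids[y]))


def accuracy(centroids, samples):
    """Fraction of samples whose predicted label matches the true label."""
    return sum(predict(centroids, x) == y for x, y in samples) / len(samples)


# Hypothetical clean training set: class 0 near 1, class 1 near 9.
clean_train = [(0, 0), (1, 0), (2, 0), (8, 1), (9, 1), (10, 1)]
# Hypothetical attacker-injected points: far-away values mislabeled as class 0.
poison = [(20, 0), (20, 0), (20, 0)]
test_set = [(0.5, 0), (1.5, 0), (3, 0), (7, 1), (8.5, 1), (9.5, 1)]

clean_acc = accuracy(fit_centroids(clean_train), test_set)
poisoned_acc = accuracy(fit_centroids(clean_train + poison), test_set)

print(clean_acc)     # 1.0
print(poisoned_acc)  # 0.5
```

In this toy setup, three injected points are enough to drag the class 0 centroid past the class 1 centroid, so every genuine class 0 test point is misclassified. Real attacks and real models are far more complex, but the principle is the same: the attacker never touches the model, only its training data.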

The work on MambaShield was published in the international journal Expert Systems with Applications (2026). The study reports that the accuracy of standard models can fall from 95% to 40% or lower under poisoning attacks. MambaShield is presented as a more resilient architecture built on selective state space models, with computational complexity that grows linearly with input length.
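The linear-complexity property of selective state space models can be illustrated with a scalar recurrence. This is a sketch under simplifying assumptions, not the MambaShield implementation: the parameter names (`a`, `w_delta`, `w_b`, `w_c`) are hypothetical, the hidden state is a single number rather than a vector, and the input term uses a simple Euler-style discretization.

```python
import math


def softplus(z):
    """Smooth positive function, used here to keep the step size above zero."""
    return math.log1p(math.exp(z))


def selective_scan(xs, a=-1.0, w_delta=1.0, w_b=1.0, w_c=1.0):
    """Run a scalar selective state space recurrence over the sequence xs.

    "Selective" means the step size and the input/output gates depend on
    the current input, so the model can choose what to keep or forget.
    All parameters are illustrative assumptions.
    """
    h = 0.0
    ys = []
    for x in xs:                        # one pass: work grows linearly with len(xs)
        delta = softplus(w_delta * x)   # input-dependent step size
        a_bar = math.exp(delta * a)     # decay factor in (0, 1) because a < 0
        b_bar = delta * (w_b * x)       # input-dependent input gate
        h = a_bar * h + b_bar * x       # state update
        ys.append((w_c * x) * h)        # input-dependent readout
    return ys


outputs = selective_scan([1.0, -0.5, 2.0, 0.3])
print(len(outputs))  # 4
```

Each step does a fixed amount of work and carries only the state `h` forward, which is why the cost scales linearly with sequence length, in contrast to attention mechanisms that compare every position with every other.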

The research brings together AI and cybersecurity and signals a shift toward infrastructure-level security. Protecting machine learning systems is becoming a distinct market segment. NIST classifies poisoning attacks as a baseline threat alongside evasion and privacy attacks, highlighting the global relevance of the issue.

End users benefit indirectly. More resilient models result in more reliable digital services in banking, healthcare and other sectors. For Russia, the development creates export opportunities in AI security. Globally, it reflects a broader shift from measuring model performance to prioritizing robustness.

Foundation for International Validation

The development creates export opportunities for Russian AI developers, particularly in the form of expertise and B2B or B2G modules for secure AI and ML systems. Publication in Expert Systems with Applications with a DOI supports international validation and allows the work to be independently verified and incorporated into the global research discourse on adversarial machine learning.

The potential in the domestic market is greater than in exports. Use cases include intrusion detection systems, antifraud tools, industrial network monitoring, secure medical and banking modules, and protection mechanisms for critical information infrastructure. Demand for these technologies is growing as the cyberthreat landscape becomes more complex and attackers increasingly use neural networks for reconnaissance, vulnerability discovery and bypassing defenses.

The outlook depends on pilot deployments with industrial customers, integration into existing cybersecurity stacks and demonstrated resilience at acceptable cost levels. Validation across diverse datasets and attack types is also critical. At the same time, this line of research could help build a broader AI security ecosystem, including laboratories, applied products and educational programs.

The Next Wave of Defense

In 2024, MEPhI published a study titled "Improved Robust Adversarial Model against Evasion Attacks on Intrusion Detection Systems", which focused on improving the resilience of intrusion detection systems to evasion attacks. MambaShield continues that line of research into robust AI for cybersecurity. In the same year, ITMO University and Raft launched LLM Security Lab to build expertise and train specialists in AI security. In 2025, the lab unveiled HiveTrace, a system designed to protect generative AI applications from prompt injection, data leakage and other vulnerabilities. Unlike HiveTrace, MambaShield focuses on hardening the model and the training process itself against poisoning attacks. Together, these efforts show that multiple tracks of AI security development are emerging in Russia.

In 2024, NIST updated its core guidance on adversarial machine learning, identifying poisoning attacks as one of the key threat classes. A year later, research interest increased around intrusion detection systems resilient to poisoning attacks, including in federated learning and IoT scenarios. In 2026, Microsoft reported that attackers were using AI across all stages of cyberattacks. This trend increases the value of technologies that keep AI systems resilient to deliberate manipulation of data and environments. In that context, MambaShield is part of a new wave of defensive technologies.

Russia’s Role in the Global AI Security Race

Domestic teams are targeting a critical niche focused on securing AI and ML models themselves. The sector will likely grow faster than the broader AI market, as trust, resilience and certification are prerequisites for scaling AI in banking, healthcare, government and industrial environments.

The development highlights Russia’s role in the global AI security race and underlines the link between rising cyberthreats and the emergence of new defensive architectures. It also signals increasing competition between academic research and future industrial products.

In the near term, the project is likely to remain at the stage of academic publication and pilot deployments. However, if it progresses to industry adoption, it could be used to build modules for SOC platforms, antifraud systems, traffic monitoring and secure AI environments in critical infrastructure. The most likely path to commercialization involves partnerships with cybersecurity vendors and large enterprise customers.